Artificial Intelligence PACs Aim for Invincibility and Zero Safeguards

"There's no question that what the big tech companies are doing is very bad," stated Trump. Then why sign an executive order that removes AI safeguards for these companies, Mr. President? In December 2025, Donald J. Trump signed an executive order calling for a minimally burdensome approach to AI regulation. In effect, the order keeps any meaningful policy from passing without constant rejection and scrutiny from AI leaders.

To further this aggression, AI companies are subpoenaing AI watchdog organizations. AI watchdog organizations are oversight groups that monitor, audit, and evaluate AI systems to ensure they are safe, transparent, and legally compliant.

For example, The Midas Project tracks policy changes and violations across major companies and governments.

Tyler Johnston, the Executive Director at The Midas Project, was subpoenaed by OpenAI.

Three major PACs working to block AI regulation, and the companies backing them, are:

- TechNet: Alphabet, Anthropic, OpenAI, and

- Chamber of Progress: OpenAI and a16z

- American Innovators Network: a16z and Y Combinator

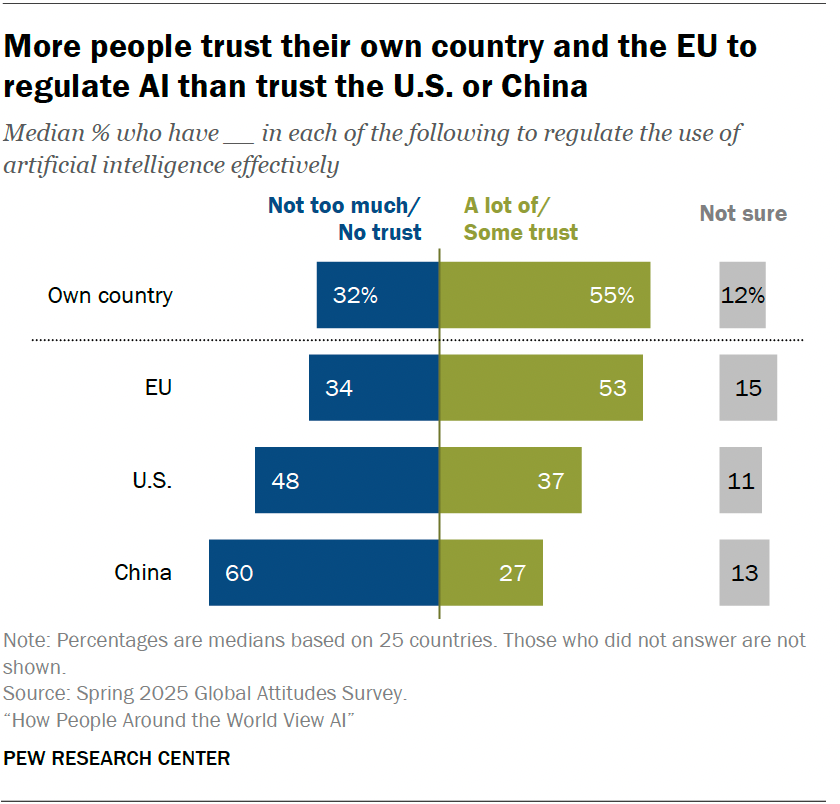

According to Pew Research Center, 48% of Americans have little to no trust in their country to regulate the use of AI effectively. An additional 12% are unsure.

These numbers suggest that a majority of individuals have concerns regarding AI. Individuals have the right to be concerned when xAI, Anthropic, Google Gemini, Grok, and others violate corporate AI safety policies on a semi-monthly basis, as documented by The Midas Project.

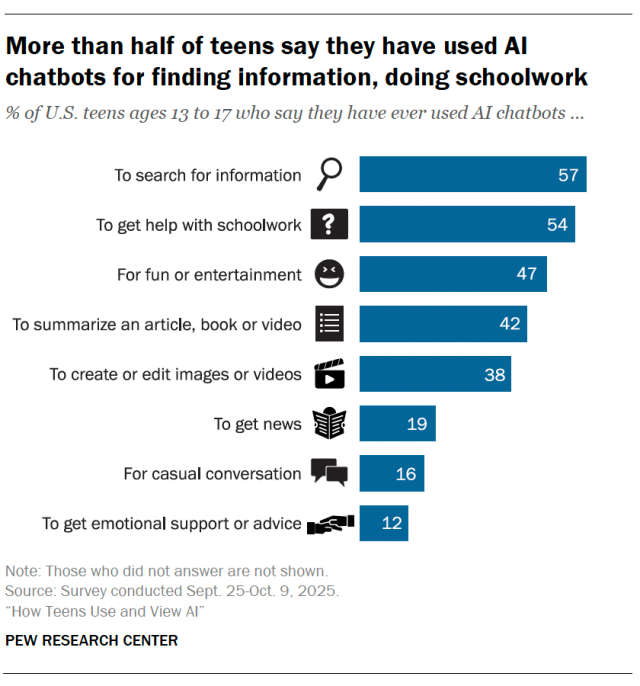

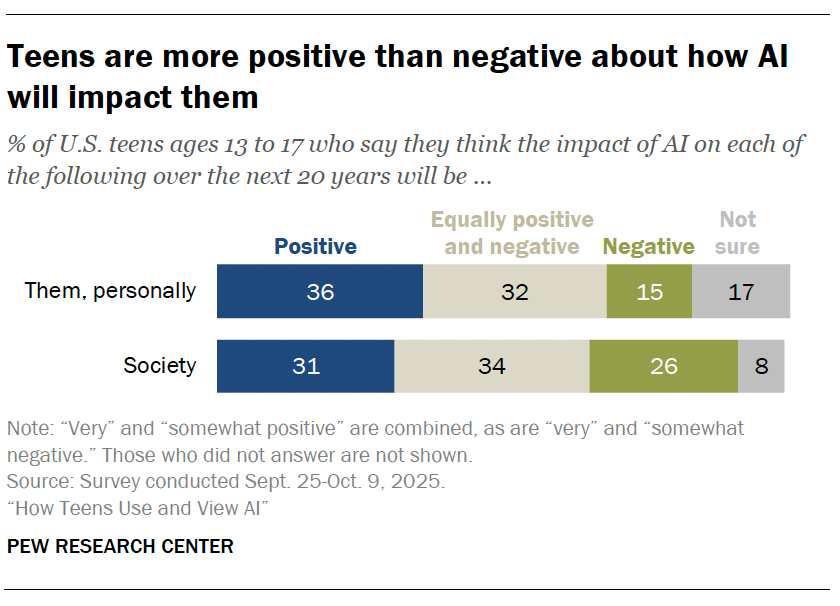

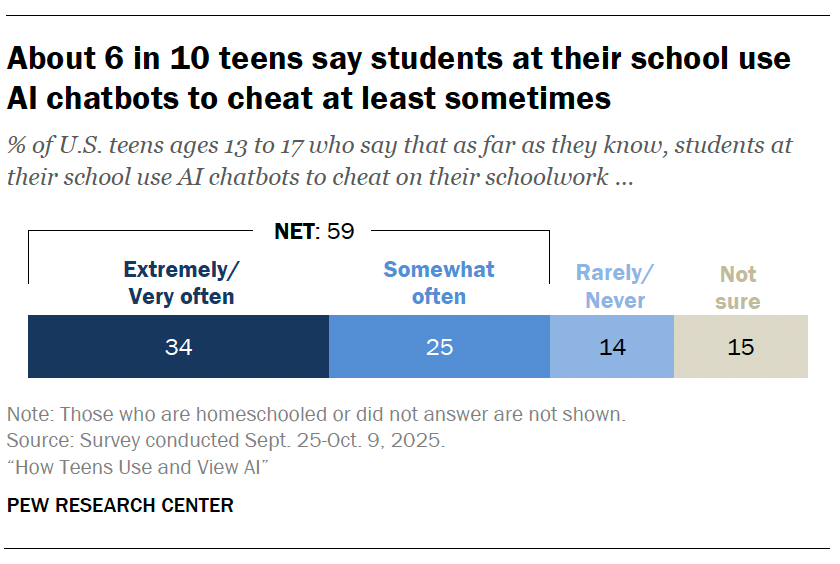

In contrast, children, a vulnerable population, offer a different take on AI.

Their positivity likely stems from the idea that the tool lets them bypass the discomfort of growth and helps them cheat on their assignments.

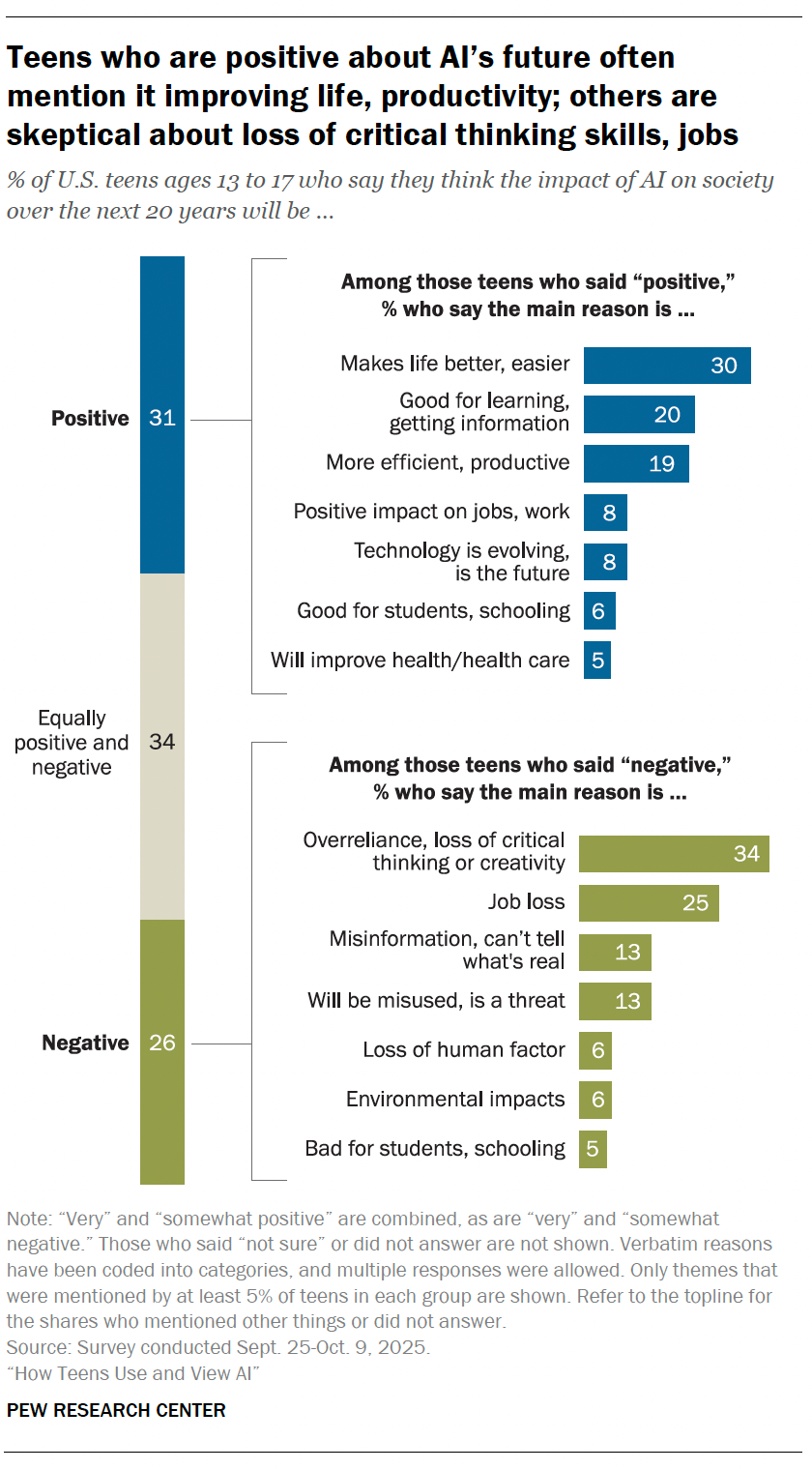

However, at least some children agree that the loss of critical thinking skills is worth noting.

Children's environmental factors and the potential negative outcomes for schools can set our future generations up for failure in fields where critical thinking and creativity are mandatory.

Furthermore, vulnerable populations confide in AI as if it were a therapist or counselor. Children use AI to obtain emotional support or advice.